The Internet offers many opportunities for connection, information, and commerce. However, this digital landscape also harbors a dark side: common online scam tactics that trick unsuspecting users into revealing personal information or parting with their money.

These scams can be sophisticated and persuasive; even the most tech-savvy individuals can fall victim.

This guide explores various online scam tactics, equipping you with the knowledge to identify and avoid them. By understanding these deceptive practices, you can confidently navigate the online world and protect yourself from financial loss and identity theft.

12 Common Online Scam Tactics

#1 Phishing Scams: The Bait and Switch of the Digital Age

Phishing scams remain one of the most common online scam tactics. Phishing emails or messages appear to come from legitimate sources, such as banks, credit card companies, or social media platforms. These messages often create a sense of urgency or fear, prompting you to click on a malicious link or download an attachment.

Once you click on the link or attachment, it might:

- Direct you to a fake website: This website may closely resemble the actual website of the supposed sender, tricking you into entering your login credentials, social security number, or other sensitive information.

- Download malware: The attachment might contain malware that infects your computer, steals your information, or holds your data hostage with ransomware.

How to Spot Phishing Scams

- Suspicious Sender: Be wary of emails or messages from unknown senders, especially those that open with a generic greeting. Legitimate companies will typically address you by name.

- Sense of Urgency: Phishing emails often pressure you to act immediately, claiming your account is at risk, or there’s a limited-time offer.

- Grammatical Errors and Typos: Legitimate companies typically have professional email formatting. Phishing emails might contain grammatical errors or typos.

- Unfamiliar Links or Attachments: Don’t click on links or open attachments in emails from unknown senders. Verify the sender’s legitimacy before interacting with any content.
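
The warning signs above can be turned into a rough checklist in code. The following is a minimal sketch, not a real spam filter: the phrase lists, the `phishing_red_flags` function, and the example addresses are all hypothetical and chosen only for illustration.

```python
import re

# Hypothetical phrase lists for illustration; real filters use far richer signals.
URGENCY_PHRASES = ("act now", "immediately", "verify your account", "limited time")
GENERIC_GREETINGS = ("dear customer", "dear user", "dear account holder")


def phishing_red_flags(sender: str, body: str, known_senders: set[str]) -> list[str]:
    """Return the red flags from the checklist that appear in a message."""
    flags = []
    text = body.lower()

    if sender.lower() not in known_senders:
        flags.append("unknown sender")
    if any(greeting in text for greeting in GENERIC_GREETINGS):
        flags.append("generic greeting")
    if any(phrase in text for phrase in URGENCY_PHRASES):
        flags.append("sense of urgency")
    if re.search(r"https?://", text):
        flags.append("contains a link")
    return flags


# Example with made-up addresses (the ".test" domains do not exist).
example_flags = phishing_red_flags(
    "alerts@examp1e-bank.test",
    "Dear customer, act now to verify your account: http://examp1e-bank.test/login",
    known_senders={"alerts@example-bank.test"},
)
print(example_flags)
```

A message that raises several flags at once deserves extra scrutiny, but the absence of flags does not prove that a message is safe.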

#2 Pharming Scams: A Deceptive Domain Disguise

While similar to phishing, pharming scams take a slightly different approach. Instead of relying on a deceptive link, pharming manipulates the DNS (Domain Name System) settings your device uses.

DNS translates website domain names into IP addresses that computers can understand. In a pharming scam, attackers redirect you to a fake website that looks identical to the legitimate one, even though you typed in the correct URL.

Once on the fake website, you might unknowingly enter your login credentials or other sensitive information, which is then stolen by the attackers.

How to Avoid Pharming Scams

- Bookmark Trusted Websites: Instead of relying on links in emails or search results, access websites directly through trusted bookmarks.

- Check the URL Carefully: Before entering any information on a website, scrutinize the URL for typos or slight variations from the legitimate domain name.

- Look for Security Indicators: Ensure the website uses HTTPS encryption (indicated by a lock symbol in the address bar) for secure communication.
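
The URL and HTTPS checks above can be sketched in a few lines of Python. This is an illustration under stated assumptions: `TRUSTED_DOMAINS`, `check_url`, and the 0.8 similarity threshold are hypothetical choices, and a check like this only catches lookalike addresses. It cannot detect a DNS-level redirect where the URL itself is correct.

```python
from difflib import SequenceMatcher
from urllib.parse import urlparse

# Hypothetical allowlist; replace with the domains you actually use.
TRUSTED_DOMAINS = {"example-bank.com", "example-shop.com"}


def check_url(url: str, trusted: set[str] = TRUSTED_DOMAINS, threshold: float = 0.8) -> str:
    """Classify a URL as trusted, a likely lookalike, not HTTPS, or unknown."""
    parts = urlparse(url)
    host = (parts.hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]

    if parts.scheme != "https":
        return "warning: not HTTPS"
    if host in trusted:
        return "ok: trusted domain over HTTPS"
    # Flag hosts that are suspiciously similar to a trusted domain.
    for domain in sorted(trusted):
        if SequenceMatcher(None, host, domain).ratio() >= threshold:
            return f"warning: looks like {domain} but is not"
    return "unknown domain"


print(check_url("https://www.example-bank.com/login"))
print(check_url("https://examp1e-bank.com/login"))
print(check_url("http://example-bank.com/login"))
```

The similarity threshold is a judgment call: set it too low and unrelated sites are flagged, too high and subtle typos slip through.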

#3 Tech Support Scams: The “We’ve Detected a Problem” Con

Tech support scams often involve unsolicited calls or pop-up messages claiming to be from technical support. These messages might warn you of a virus infection on your computer or other security threats.

The scammer then offers to “fix” the problem for a fee, often pressuring you to grant remote access to your computer or purchase unnecessary software.

How to Avoid Tech Support Scams

- Don’t Trust Unsolicited Calls: Legitimate tech support companies won’t contact you out of the blue.

- Verify Information: If you receive a call about a supposed computer issue, contact the tech support department of your software or hardware provider directly to verify its legitimacy.

- Never Give Remote Access: Don’t grant remote access to your computer to unknown callers.

- Keep Software Updated: Outdated software can have security vulnerabilities. Regularly update your operating system and security software to minimize the risk of malware infections.

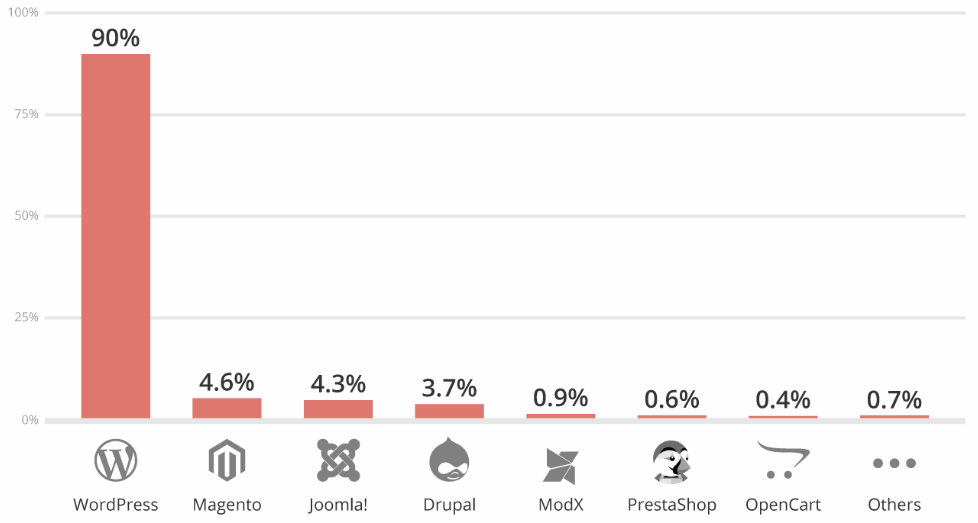

#4 Investment Scams: Promises of Quick Riches and Empty Pockets

The allure of easy money can be a powerful motivator, making investment scams a common online scam tactic.

These scams often involve unsolicited investment opportunities with unrealistic promises of high returns with little or no risk. The scammers might pressure you to invest quickly or use fake testimonials and endorsements to create a sense of legitimacy.

How to Avoid Investment Scams

- Be Wary of Unsolicited Offers: Legitimate investment firms won’t pressure you into investing quickly.

- Research Before You Invest: Never invest in anything you don’t understand thoroughly. Research the investment opportunity, the company involved, and its track record before committing any money.

- Beware of Guaranteed Returns: Promises of guaranteed high returns are a red flag. All investments carry some degree of risk.

- Check with Regulatory Bodies: Verify the legitimacy of the investment opportunity and the individuals promoting it with relevant regulatory bodies.
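
To see why promised returns can be unrealistic, it helps to compound the advertised rate over a full year. The sketch below does that arithmetic; the "1% per day" pitch is a hypothetical example rather than a real offer, and `annual_growth_factor` is a name made up for illustration.

```python
def annual_growth_factor(periodic_rate: float, periods_per_year: int) -> float:
    """Compound a per-period return over one year and return the growth multiple."""
    return (1 + periodic_rate) ** periods_per_year


# Hypothetical pitch: "a guaranteed 1% per day".
factor = annual_growth_factor(0.01, 365)
print(f"$1,000 would supposedly become about ${1000 * factor:,.0f} after one year")
```

Compounded daily, 1% works out to a roughly 37-fold gain in a year, which no legitimate investment can guarantee.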

#5 Fake Online Stores: Bargain Basement Blues

The Internet offers many online stores, but not all are created equal. Common online scam tactics include fake online stores that lure you in with unbelievably low prices on popular brand-name items.

Once you place an order and pay, you may receive nothing, a cheap imitation of the product, or even malware-laden software.

How to Avoid Fake Online Stores

- Shop at Reputable Retailers: Stick to established online retailers with a good reputation.

- Check for Reviews: Read online reviews from other customers before purchasing.

- Beware of “Too Good to Be True” Prices: If a price seems suspiciously low, it probably is.

- Look for Security Features: Ensure the website uses HTTPS encryption and has a secure payment gateway.

#6 Social Media Scams: Friends, Followers, and Phony Profiles

Social media platforms are a breeding ground for common online scam tactics. These scams can take many forms, such as:

- Fake friend requests: Scammers might create fake profiles pretending to be friends, family members, or celebrities to gain your trust and eventually ask for money or personal information.

- Impersonation scams: Scammers might impersonate legitimate companies or organizations on social media to trick you into revealing sensitive information.

- Social media contests and giveaways: Fake contests or giveaways on social media might promise expensive prizes but require you to share personal information or pay a participation fee.

How to Avoid Social Media Scams

- Be Wary of Friend Requests: Don’t accept friend requests from people you don’t know.

- Scrutinize Profiles: Look for inconsistencies in profile information and photos, which can indicate a fake profile.

- Don’t Share Personal Information: Be cautious about what you share on social media, especially financial information or social security numbers.

- Verify Information: If you receive a message from someone claiming to be a friend or a company, contact them directly through a verified channel to confirm their legitimacy.

#7 Dating and Romance Scams: Love in the Time of Deception

Dating platforms can be an excellent way to connect with people, but they also attract scammers who use common online scam tactics to exploit emotions.

These romance scams often involve the scammer building an online relationship with the victim, gaining their trust, and eventually manipulating them into sending money or gifts.

How to Avoid Dating and Romance Scams

- Beware of Early Declarations of Love: It might be a red flag if someone professes deep feelings for you very quickly.

- Be Wary of Requests for Money: Legitimate love interests won’t ask you for money online.

- Reverse Image Search Photos: If you suspect a profile might be fake, do a reverse image search of their profile picture to see if it appears elsewhere online.

- Meet in Person: Once you feel comfortable, arrange to meet in person in a public setting. Online relationships should eventually transition to real-life interaction.

One particularly damaging form of romance scam is the “pig butchering” scheme, where fraudsters cultivate a relationship over weeks or months before steering their victim toward a fake cryptocurrency investment platform. Victims are shown fabricated returns to encourage larger deposits, and by the time they try to withdraw, the funds and the scammer have vanished. Because these scams involve complex blockchain transactions that cross international borders, recovering stolen money without professional help can be extremely difficult. Victims who have lost funds through this type of scheme may want to consult a crypto scam lawyer to understand whether legal recovery options are available in their situation.

#8 Work-from-Home Scams: The Elusive Path to Easy Money

The dream of working from home and earning a good income can be enticing, making work-from-home scams a prevalent online scam tactic.

These scams often advertise jobs that require minimal effort and promise high earnings. However, they may require upfront fees, involve pyramid schemes, or turn out to be illegal activities disguised as legitimate work.

How to Avoid Work-from-Home Scams

- Research the Company: Before applying for any work-from-home job, thoroughly research the company and the job description.

- Beware of Upfront Fees: Legitimate companies typically don’t ask for upfront fees for employment.

- Investigate the Job Description: Be wary of jobs that sound too good to be true or require minimal effort for high pay.

- Check with Regulatory Bodies: Verify the company’s legitimacy with relevant regulatory bodies, especially if the job involves financial transactions.

#9 Beware of Free Trials and Auto-Renewals

Many online services offer free trials to entice users to sign up. However, some common online scam tactics involve free trials that automatically renew into paid subscriptions without proper notification. You might unknowingly incur charges if you don’t cancel the service before the free trial ends.

How to Avoid Free Trial Scams

- Read the Fine Print: Before signing up for any free trial, carefully read the terms and conditions, including the auto-renewal policy.

- Set Calendar Reminders: Set calendar reminders to cancel the free trial before it converts to a paid subscription if you don’t intend to continue using the service.

- Use a Separate Payment Method: Consider using a virtual credit card or a prepaid debit card specifically for free trials to avoid unintended charges.
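
Working out the reminder date is simple date arithmetic. The sketch below assumes a trial measured in whole days and a reminder set a couple of days before it ends; `cancel_reminder` and its `notice_days` parameter are hypothetical names used for illustration.

```python
from datetime import date, timedelta


def cancel_reminder(trial_start: date, trial_days: int, notice_days: int = 2) -> date:
    """Return the date to be reminded, `notice_days` before the trial ends."""
    trial_end = trial_start + timedelta(days=trial_days)
    return trial_end - timedelta(days=notice_days)


# Example: a 14-day trial started on 1 March 2024.
print(cancel_reminder(date(2024, 3, 1), trial_days=14))
```

Leaving a buffer of a few days allows for services that need time to process a cancellation, but the exact cutoff is set by each service's terms.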

#10 Fake Antivirus Software Scare Tactics

Fake antivirus scams typically start with unsolicited pop-up messages or websites warning you of nonexistent virus infections on your computer.

These fake warnings might pressure you to download and purchase supposedly essential antivirus software to remove the “threats.” However, the downloaded software might be malware designed to steal your information or harm your computer.

How to Avoid Fake Antivirus Scams

- Don’t Trust Unsolicited Pop-Ups: Never download or install software from pop-up messages or untrusted websites.

- Use Reputable Antivirus Software: Install a reputable antivirus program from a trusted source and keep it updated.

- Schedule Regular Scans: Scan your computer regularly with antivirus software to detect and remove potential threats.

#11 Beware of Scareware and Urgent Downloads

Common online scam tactics sometimes involve scareware. Scareware is software designed to frighten users into purchasing unnecessary software or subscriptions. It might show fake virus warnings, pop-up messages claiming your computer is locked, or urgent prompts to download software to fix a nonexistent problem.

How to Avoid Scareware Scams

- Don’t Download Under Pressure: Never download software under pressure from pop-up messages or urgent warnings.

- Restart Your Computer: If your computer seems sluggish or displays unusual behavior, restarting it might resolve the issue.

- Consult a Trusted Technician: If unsure about a supposed computer problem, consult a trusted technician for assistance.

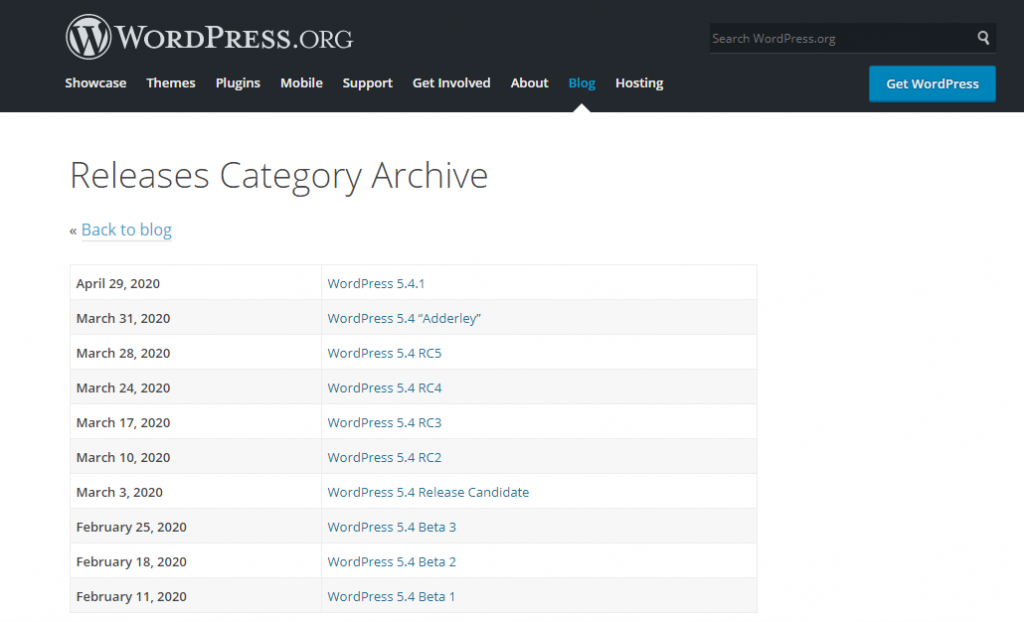

#12 Beware of Malicious Mobile Apps

Mobile apps offer incredible functionality and convenience but can also be a gateway for common online scam tactics. Malicious mobile apps might:

- Contain malware: These apps can steal your personal information and banking credentials or track your online activity.

- Incur hidden charges: Some apps might subscribe you to premium services or in-app purchases without your explicit consent.

- Bombard you with intrusive ads: Malicious apps might display excessive or inappropriate advertisements that disrupt your user experience.

How to Avoid Malicious Mobile App Scams

- Download from Reputable App Stores: Only download apps from official app stores, such as the Google Play Store or Apple App Store, where some security measures are in place.

- Read Reviews and Ratings: Read user reviews and ratings before downloading an app to understand its legitimacy and functionality.

- Check App Permissions: Pay attention to the permissions requested by an app. Be wary of apps requesting access to features they don’t need, such as your microphone or location data.
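
The permission check can be pictured as a simple set comparison between what an app asks for and what an app of its kind plausibly needs. The sketch below is illustrative only: the `EXPECTED_PERMISSIONS` mapping, the category names, and `unexpected_permissions` are hypothetical and do not reflect any app store's actual permission model.

```python
# Hypothetical mapping of app categories to the permissions they plausibly need.
EXPECTED_PERMISSIONS = {
    "flashlight": {"camera"},
    "navigation": {"location"},
    "voice_recorder": {"microphone", "storage"},
}


def unexpected_permissions(category: str, requested: set[str]) -> set[str]:
    """Return the requested permissions that the app category does not justify."""
    expected = EXPECTED_PERMISSIONS.get(category, set())
    return requested - expected


# Example: a flashlight app asking for far more than it should need.
extra = unexpected_permissions("flashlight", {"camera", "microphone", "location"})
print(sorted(extra))
```

Anything left in the result is worth questioning before you install the app.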

Stay Vigilant and Protect Yourself

By familiarizing yourself with common online scam tactics and implementing the security measures mentioned above, you can significantly reduce your risk of falling victim to online deception. Remember, a healthy dose of skepticism and caution is crucial in the digital age.

Don’t hesitate to verify information and research opportunities before committing, and avoid sharing sensitive information readily. By staying vigilant and informed, you can confidently navigate the online world and protect yourself from financial loss and identity theft.

In conclusion, stories of scams are no reason to stop using the Internet. Instead, we should maintain good security hygiene online, especially when dealing with strangers on social media.
