In this post, I will show you how to get unbanned from Omegle like a pro.

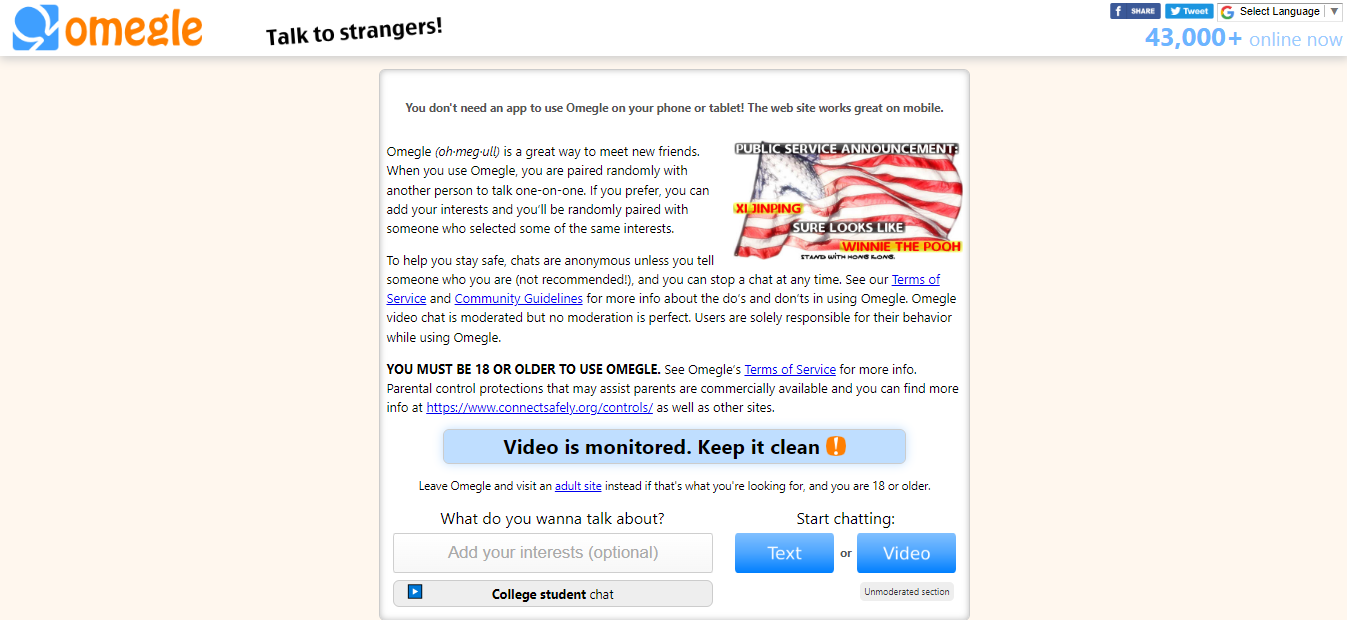

Omegle is an anonymous chatroom platform that allows users to connect with strangers all over the world.

Unfortunately, it is not uncommon for users to be banned for violating the site’s terms of service.

If you have been banned from Omegle and are looking for a way to get unbanned, this step-by-step guide is for you.

In this post, I will discuss the reasons why you might be banned, what measures you can take to get unbanned, and how to avoid being banned in the future.

With a little luck and effort, you’ll be back online in no time. So, if you’re ready to learn how to get unbanned from Omegle, let’s get started!

What Is Omegle?

Omegle is an anonymous chat website that allows you to connect with random people around the world. It was launched in 2009 and has gained a lot of popularity since then.

Omegle is free to use and requires no registration. All you need to do is go to their website and click on ‘start a chat’.

You’ll then be connected to a random person and can start talking. The conversations are completely anonymous, so you can talk about whatever you want without worrying about any personal information being shared.

One of the features of Omegle is the ability to have text, audio, and video chats. This allows you to get to know the person you’re talking to better than if you were talking via text alone.

There are also some safety features in place on Omegle, such as the ability to report users who are being inappropriate or breaking the rules.

You can also disconnect from a chat at any time if you’re feeling uncomfortable or if it’s not going the way you expected it to.

Overall, Omegle is a great way to meet new people and have some interesting conversations. Give it a try and see how you like it!

Reasons Why You Might Be Banned From Omegle

Before we get into how to get unbanned from Omegle, let’s first discuss why you might have been banned in the first place.

There are a few reasons why you might have been banned from using Omegle, including:

- You tried to use Omegle’s live video function without being either over 18 years old or using a webcam with a built-in indicator that you are not underage.

- You were using a fake name or an offensive name.

- You tried to use multiple accounts at once or use an ineffective VPN to change your IP address.

- You’re using a fake webcam or a webcam that is designed to spy on others.

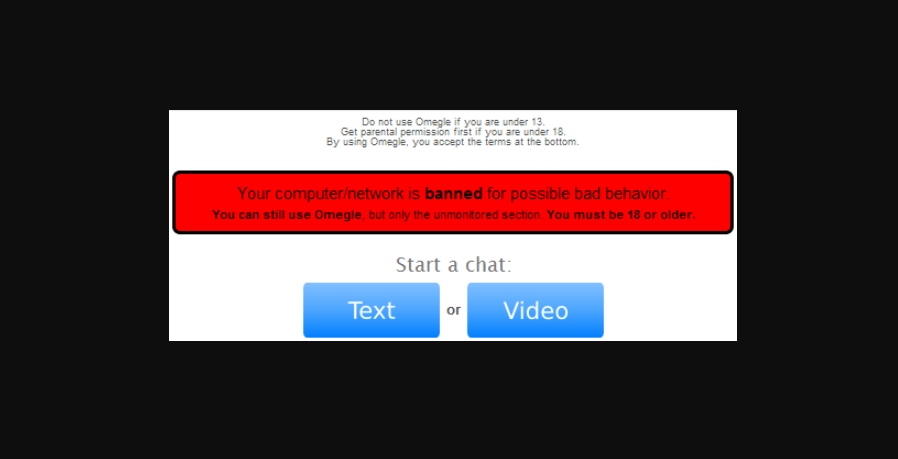

How To Determine If You Have Been Banned On Omegle

If you suspect that you have been banned from Omegle, there are a few ways to determine if this is indeed the case.

While you cannot know for sure unless Omegle confirms it, there are a few telltale signs of a ban, including:

- No one is connecting with you during your Omegle session.

- You cannot see any users in your Omegle chat.

- When you try to connect with a user on Omegle, you receive an error message.

If you’ve been banned from Omegle for any reason, don’t worry — you can get unbanned!

All you need to do is wait for your ban to expire. Depending on your offence, this could take anywhere from a few days to several weeks.

With a bit of patience and some luck, you’ll be able to get unbanned from Omegle and start having fun conversations in no time.

How To Get Unbanned From Omegle

Now that we’ve covered why you might be banned and how to tell if you have been, let’s go through the steps you can take to get unbanned from Omegle.

1. Contact Omegle’s customer service team

If you have been banned from Omegle and are serious about getting unbanned, the first step is to contact Omegle’s customer service team.

You can do this by clicking on the “Report Abuse” link at the top of the page, clicking on the “Contact Us” link at the bottom of the page, or sending an email to privacy@omegle.com.

Explain why you think you were unfairly banned. The sooner you contact Omegle, the better.

In the meantime, stay off the site and avoid any activities that could get you banned again.

Once you have contacted Omegle, you will need to prove that you are not underage and that you are not using a fake name.

You’ll have a better chance of getting unbanned if you follow the instructions given by Omegle’s customer service team.

Once your ban has expired, you can jump back onto the site and start chatting with new people. It’s a great way to connect with others and exchange ideas, so make sure to take advantage of it!

2. Clear your browser’s cookies and cache

If you have been banned from Omegle, you might be able to get unbanned by clearing your browser’s cookies and cache.

Click on your browser’s settings, select the “privacy” tab or section, and click on the “clear cookies” or “clear cache” button.

Alternatively, you can use a premium tool like CCleaner to clear the cache on your web browser.

3. Change your IP address by using a VPN service

If you have been banned from Omegle, you might be able to get unbanned by changing your IP address.

VPN services are incredibly easy to use and are available for as little as $5 per month.

When choosing a VPN, be sure to select a VPN service that supports Omegle and has servers located in the country where you want to appear to be located.

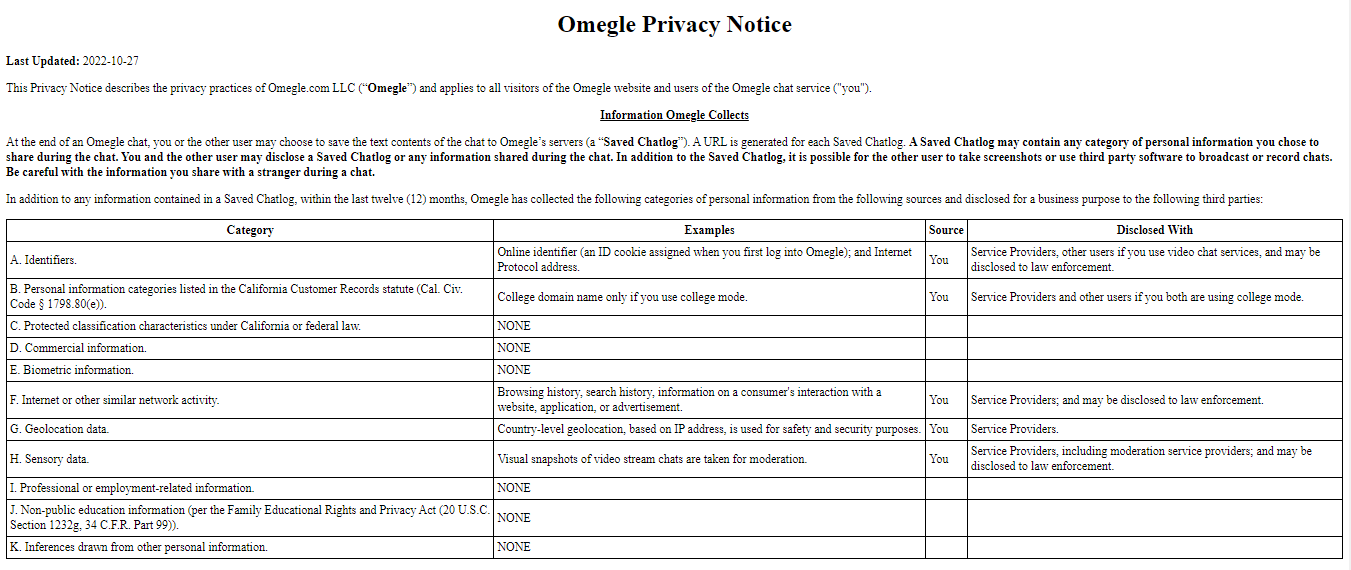

Can You Be Tracked On Omegle?

The short answer is yes, you can be tracked on Omegle. In fact, Omegle has a detailed privacy policy that outlines how your data is collected and used.

To start off, it’s important to note that Omegle does not require you to create an account to use the service.

This means that you can use the service without handing over account details, so Omegle has no registered personal information to track you with.

However, Omegle collects and stores some basic information about its users. This includes digital information, such as:

- IP addresses

- Device information

- Browser type

- College domain name

- Browsing history

- Search history

- Visual snapshots of video stream chats, etc.

This information is used to prevent abuse and spam on the platform and to improve the overall user experience.

This means that while Omegle cannot track you directly with your personal information, they can still track your activity on the platform. This includes things like the conversations you have and the websites you visit while using the platform.

In addition, Omegle can disclose your digital information to service providers and even law enforcement agencies.

Finally, it’s important to remember that Omegle is not a secure or private platform. While they do have measures in place to protect user data, it’s still possible for malicious actors to access and exploit this data.

As such, it’s important to be aware of the risks associated with using the platform and take steps to protect yourself.

Best VPNs For Omegle [Working & Effective]

Finding the best VPNs for Omegle can be a tricky task since there are so many options out there. That’s why I’ve put together a list of the 10 best VPNs for Omegle that are working.

1. ExpressVPN

ExpressVPN is one of the most reliable and secure VPNs on the market. It offers fast speeds and unlimited bandwidth, both of which are essential for Omegle users. It also has strong encryption and a strict no-logging policy to keep your data secure.

2. NordVPN

NordVPN is another great choice for Omegle users. It has servers in over 60 countries, so you can access Omegle from any corner of the world. It also has impressive speeds, strong encryption, and a no-logging policy.

3. CyberGhost VPN

CyberGhost offers great speeds and unlimited bandwidth, making it ideal for Omegle users. It also offers servers in over 91 countries, so you can access Omegle from anywhere.

4. Private Internet Access

Private Internet Access is one of the most reliable VPNs on the market. Its strong encryption and strict no-logging policy will keep your data secure while using Omegle.

5. IPVanish

IPVanish offers excellent speeds and unlimited bandwidth, making it perfect for Omegle users. It also has servers in over 75 locations across the world and strong encryption to keep your data safe.

6. ProtonVPN

ProtonVPN is another excellent choice for Omegle users because it offers servers in over 67 countries and strong encryption to keep your data safe. Plus, it has fast speeds and unlimited bandwidth for a more enjoyable browsing experience.

7. TunnelBear

TunnelBear offers fast speeds and unlimited bandwidth, so you can use Omegle without any problems. It also has servers in over 47 countries, so you can access Omegle from virtually anywhere.

8. Ivacy VPN

If you’re looking for the best VPN for Omegle, then look no further than Ivacy! This VPN is ideal for unblocking Omegle, with fast speeds and reliable security. It has servers in 70+ locations, ensuring that you can access any content you want.

9. FastestVPN

FastestVPN is a great choice for Omegle users because it offers fast speeds and unlimited bandwidth. It also has servers in over 50 countries and strong encryption to keep your data secure.

10. TorGuard VPN

TorGuard VPN is another great choice for Omegle users. It offers fast speeds and unlimited bandwidth, plus servers in over 60 countries and strong encryption to keep your data secure.

How To Choose The Best VPN For Omegle

Choosing the best VPN for Omegle can be a tricky task, as there are a lot of factors to consider.

First and foremost, you should make sure that the VPN you choose is reliable and secure. No matter how good the features of a particular VPN may be, if it’s not reliable and secure, then it won’t be of any use when accessing Omegle.

The next factor to consider when choosing the best VPN for Omegle is privacy. You want to make sure that the VPN you choose has a strict no-logging policy, as this means that your data won’t be tracked or logged.

It’s also important to make sure that the VPN has strong encryption protocols in place, so that your data is kept safe and secure while you’re on the service.

In addition to reliability and security, it’s also important to look at the speed of the VPN connection. You want to make sure that the VPN you choose has a connection speed that won’t slow down your Omegle activity.

This means looking at download and upload speeds as well as latency, as these factors will all affect your browsing experience.

Finally, you should look at the cost of the Omegle VPN you’re considering. Many VPNs have free versions, but these usually lack features or have limited functionality.

It’s important to make sure that you’re getting all of the features you need for a good Omegle experience for a reasonable price.

By taking into account all of these factors, you should be able to find the best VPN for Omegle that suits your needs and budget.
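
One way to make these trade-offs concrete is a simple weighted score. The sketch below is only an illustration: the weights, the 0-to-10 ratings, and the provider names `vpn_a` and `vpn_b` are hypothetical placeholders, not measurements of any real service. Adjust the weights to match your own priorities.

```python
# Hypothetical weights for the factors discussed above (they sum to 1.0).
WEIGHTS = {"reliability": 0.3, "privacy": 0.3, "speed": 0.25, "cost": 0.15}


def score_vpn(ratings: dict[str, float]) -> float:
    """Return a weighted score (0-10) from per-factor ratings (each 0-10)."""
    missing = set(WEIGHTS) - set(ratings)
    if missing:
        raise ValueError(f"missing ratings: {sorted(missing)}")
    return sum(WEIGHTS[factor] * ratings[factor] for factor in WEIGHTS)


def rank_vpns(candidates: dict[str, dict[str, float]]) -> list[tuple[str, float]]:
    """Sort candidates from highest to lowest weighted score."""
    scored = [(name, round(score_vpn(r), 2)) for name, r in candidates.items()]
    return sorted(scored, key=lambda item: item[1], reverse=True)


if __name__ == "__main__":
    # Made-up example ratings, not real benchmarks.
    candidates = {
        "vpn_a": {"reliability": 9, "privacy": 8, "speed": 7, "cost": 5},
        "vpn_b": {"reliability": 7, "privacy": 9, "speed": 8, "cost": 8},
    }
    for name, score in rank_vpns(candidates):
        print(f"{name}: {score}")
```

With these made-up numbers, `vpn_b` ranks first because its better price and speed outweigh its lower reliability rating. Raising the reliability weight would shift the result toward `vpn_a`.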

How To Get Unbanned From Omegle: Frequently Asked Questions

Which VPN Works For Omegle?

The best working VPN services for Omegle are ExpressVPN, NordVPN, CyberGhost VPN, PIA VPN, IPVanish, ProtonVPN, TunnelBear, and VyprVPN.

Omegle is a web-based chat service that matches users with strangers at random. It’s a popular platform for many people, but it can also be blocked by certain countries and internet providers.

For instance, Omegle is blocked in the UAE, Turkey, China, Qatar, and Pakistan.

Using a VPN can help you bypass these blocks and access Omegle from anywhere in the world. It does this by routing your internet connection through a remote server, which masks your IP address and makes it look like you’re browsing from a different location.

When it comes to finding the right VPN for Omegle, you’ll want to look for one that offers fast speeds and a reliable connection.

You’ll also want to make sure the VPN has plenty of servers located around the world so you can always find a secure connection.

In terms of specific services, I recommend checking out ExpressVPN. They offer great speeds and security, plus they have servers in over 90 countries, so you can always find a reliable connection.

Not to mention, they offer a 30-day money-back guarantee in case you’re not satisfied with their service.

Why Does Omegle Ban People?

Omegle is a chat platform that allows people to connect with random strangers from all around the world.

Unfortunately, it can also be a place where people can misbehave or act inappropriately. As such, Omegle has a set of rules that all users must follow. If a user is found to be breaking these rules, they can be banned from using Omegle.

The most common reason for a user being banned is for inappropriate behaviour. This can include using offensive language, harassing other users, or even spamming the chat with unwanted messages.

Omegle also takes a strong stance against users who try to solicit personal information from others or use the platform to distribute malicious software.

If you believe you have been unfairly banned from Omegle, you can appeal the ban directly to the company.

Omegle will investigate the issue and may even restore your access if they determine that your ban was unjustified.

Who Uses Omegle?

Omegle is an anonymous chat site that is used by people all over the world. It’s a great way to meet new people and start up conversations. So, who uses Omegle?

The answer is pretty much anyone! Omegle is popular with teenagers, college students, and young adults who are looking to make new friends and explore new interests.

It’s also used by people of all ages, backgrounds, and interests – so you never know who you might find there!

Omegle is especially useful for those who are shy or introverted, as it allows them to safely chat with strangers without having to worry about face-to-face interactions.

You can also use Omegle to practice your language skills, as you can chat with native speakers from all over the world.

No matter why you’re using Omegle, it’s important to remember to stay safe. Don’t share any personal information like your full name, address, or phone number.

Also, avoid sending any inappropriate content or engaging in inappropriate conversations. Be sure to follow the site’s rules to ensure that you have a safe and enjoyable experience.

Is Omegle Safe?

Good question – is Omegle safe? The short answer is no: Omegle is not safe for anyone to use.

Omegle is a website that allows users to chat anonymously with strangers. There’s no sign-up process, so anyone can use it, and this makes it particularly dangerous for young people.

It’s also not moderated, meaning that there’s no way to ensure that the people you’re chatting with are who they say they are.

There have been numerous reports of people being targeted by predators on Omegle.

Predators can use the website to find vulnerable people, and because the website isn’t moderated, they can do so without fear of being caught.

This makes it incredibly unsafe for anyone to use, especially young people who might not be aware of the dangers of talking to strangers online.

In addition, Omegle can be used to spread malicious content, such as malware and viruses. This can be very dangerous, as it can lead to identity theft or other forms of cybercrime.

All in all, Omegle is definitely not a safe website for anyone to use.

It’s important to be aware of the risks associated with anonymous chatting websites, and if you or someone you know is using Omegle, it’s important to be extra cautious.

Can You Use a Free VPN For Omegle?

Yes, you can use a free VPN for Omegle. A Virtual Private Network (VPN) acts as an intermediary between your computer and the internet.

It encrypts your data and helps protect your online activities from being seen by others. By using a VPN, you can access Omegle from any location, even if it is blocked in your region.

Using a free VPN for Omegle has its pros and cons. On one hand, it is free and can be used to access Omegle from any location.

On the other hand, free VPNs usually have limited data and bandwidth, which means that you may experience slow speeds or even connection drops.

Additionally, free VPNs often have fewer server locations and weaker encryption than paid VPNs.

If you are looking for a reliable, secure, and fast VPN service for Omegle, then I would recommend using a premium VPN service instead of a free VPN service.

Premium VPNs offer more features, such as unlimited data and bandwidth, faster speeds, and better encryption. They also have more server locations around the world, so you can access Omegle from anywhere.

No matter what type of VPN service you choose, make sure that it is reliable and secure. I would also recommend reading reviews to find out which VPNs offer the best features and performance for Omegle.

How To Avoid Being Banned On Omegle In The Future

Now that we’ve discussed what to do if you want to be unbanned from Omegle, let’s talk about how you can avoid being banned in the first place.

If you want to avoid being banned from Omegle, there are a few things you can do to stay on the right side of the site’s terms of service, including:

- Avoid using a fake name.

- Avoid using a webcam that is designed to spy on others.

- Don’t share illicit files.

- Avoid using a webcam with a built-in indicator that you are underage.

- Don’t use obscene usernames.

- Avoid using multiple accounts at once.

Best Omegle Alternatives

If you’re looking for some great alternatives to Omegle, you’ve come to the right place!

Omegle is a great way to meet and chat with strangers online, but there are some other awesome options out there, too.

1. Chatroulette

One of the most popular Omegle alternatives is Chatroulette. This site allows you to connect with people from all over the world in a fun and interactive way.

You can choose to chat with either a randomly selected person or with someone who has similar interests as you.

Chatroulette also has a lot of features that make it a great option, such as being able to filter out people based on their gender, age, and interests.

2. Skype

Another great alternative to Omegle is Skype. This app allows you to connect with people from anywhere in the world.

You can use the video chat feature to have conversations with friends or family, or you can use the voice call feature to talk with anyone. It also has a lot of great features like file sharing and group chats.

3. Tinychat

If you’re looking for a more laid-back Omegle alternative, you might want to try out Tinychat. This site is designed for users who want to stay anonymous while talking with others.

You can create your own private room and invite people to join it, or join other rooms that are already active. It’s also a great way to meet people from all around the world and make new friends.

4. Google Hangouts

Finally, there is Google Hangouts. This Google app allows you to connect with people from all over the world easily and conveniently.

You can use the video chat feature or just text chat if you prefer. Like Skype, it also has features like group chats, file sharing, and more.

Conclusion

If you have been banned from Omegle, there is a chance that you can get unbanned by clearing your browser’s cookies and cache, using a VPN service, and/or contacting Omegle’s customer service team.

If you want to know how to get unbanned from Omegle or avoid being banned in the first place, follow the tips in this guide.

I also recommend using a working VPN for Omegle, like ExpressVPN, NordVPN, CyberGhost VPN, PIA VPN, IPVanish, ProtonVPN, or TunnelBear.
